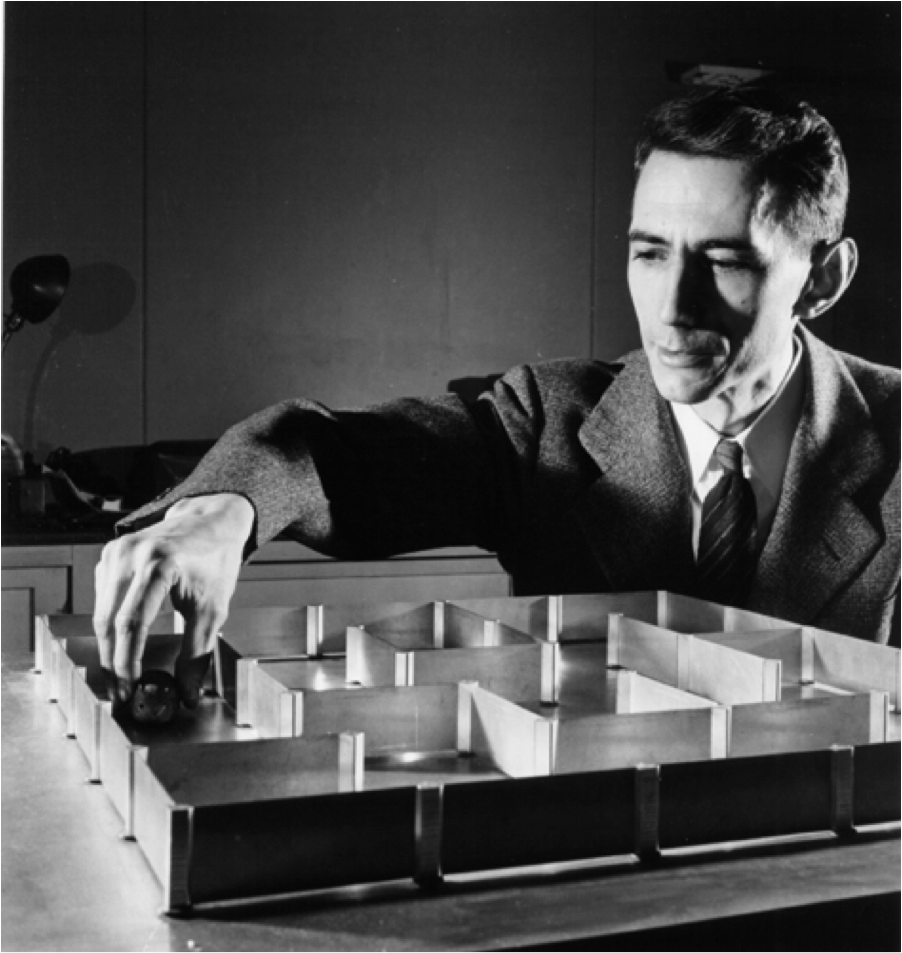

At the Macy Foundation conference on cybernetics in 1951 the inveterate inventor of information theory, Claude Shannon, shocked an assembled crowd when he debuted an electronic rat he had built. (I use the word “debut” advisedly, for this event really did resemble a theatrical debut.) Set down at the opening of a metallic grid that held a five-foot square walled maze, Shannon’s diminutive automaton, fondly christened “Rat,” proceeded to work its way through to the end. Although its movements were not smooth or graceful, and although it looked nothing like an actual maze-navigating rodent, it performed with something like aplomb. When at last it hit the “goal,” a designated sensor on the grid, it lit itself up, rang a bell, and stopped its own motors, as if in celebration. “The machine has solved the maze,” declared its inventor—who, just three years earlier, had authored for Bell Labs his Mathematical Theory of Communication, a work hailed by the end of the century as “the Magna Carta of the Information Age.”

On that day, Rat riveted a crowd of twenty-five of the nation’s foremost social, behavioral, and physical scientists. They had gathered for the eighth of ten meetings unified under the theme of “Circular and Causal Feedback Mechanisms in Biological and Social Systems.” What had them on the edge of their seats was not just Rat’s clever engineering. The maze-solving machine was in fact very simple, made of a “sensing finger” hooked up to two motors, one that operated in the east-west, the other in the north-south direction. By means of these components it could maneuver through whatever configuration of the maze – for the structure had moveable walls – Shannon chose to arrange. What gripped the audience was a quality akin to pathos: Rather than watching something (say, a highly trained lab rat) simply succeed automatically in solving a maze, the audience was watching it trying, failing, testing, going awry, and finally making its way. The electronic rat and maze were one system, and the system, in an “all too human” way (in the words of one onlooker), worked. Rat learned. This struck the assembled crowd as significant. It offered a model on which all kinds of social and biological systems could be based.

This was not all, though. Having electrified the crowd, Rat went on to unnerve it. Shannon’s maze-solving machine had a second act. After Rat navigated the maze and rang its own chime, Shannon showed how easily it could fall into error. Shift a few variables, alter the parameters so that the previous solution was no longer tenable, and there it was, running in circles in an endless loop from which it could never emerge without external input: it was stuck in a “vicious circle, … a singing condition,” and all its purported “efforts” to alter its course only made it more stuck. Audience members reached for literary equivalents: psychiatrist Henry Brosin remarked, “George Orwell … should have seen this.” “Psycho” Rat (as one participant called it) and Triumphalist Rat were alternatives: two possible futures. (Two decades earlier, certain behavioral experts, precursors to cybernetics and systems theory, had expressed just such warnings about seeking a science of control: the more successful you were, the more you had to reckon with the specter of “a wild and unadaptive chaos of behavior” that would spread should something go wrong.)

The same year that Rat performed its twin futures, a new paradigm called coercive persuasion emerged in the American behavioral sciences – and, I argue, these two events shared a common genesis in theories of systems. Coercive persuasion was the U.S. scientific response to the political-intellectual crisis over communist brainwashing capabilities that gripped the upper levels of government in the early 1950s and quickly spread to popular audiences. U.S. Air Force pilots appeared in newsreel footage blaming imperialism and admitting to germ-warfare missions; twenty-one American GIs held as Korean War POWs defected to communist lands; and there appeared to be a blind spot in American individualism, which was evidently vulnerable to Manchurian-Candidate-style ideological engineering.

A fleet of behavioral scientists took on the task of studying this phenomenon, this “something new in history,” as New Yorker writer Eugene Kinkead ominously called it. They argued somewhat contradictorily, if self-servingly, that brainwashing did not exist (there was no hocus-pocus, magical way to psychologically extinguish a human being) and that, even if it did, it was not what you thought it was. Brainwashing was really nothing more than sophisticated behaviorism, the kind of thing done to rats in mazes for decades. The resulting science of coercive persuasion, sometimes also known as “forceful interrogation,” was based on viewing the prisoner within his situation as a single system, much as Rat in his electronic maze made up a system. No longer was the prisoner seen primarily as an individual exerting heroic effort – or failing mightily – against forces that threatened to break him down into a dehumanized shell of his former self. Instead, a systems approach to coercion viewed the individual-within-the-environment as a set of circulating messages. The environment could be changed – made colder or hotter, smaller or larger, louder or quieter, more or less stimulating, more or less humiliating – and the subject, like Shannon’s Rat in his ever-changing electronic maze, would surely reflect these changes, acting in turn on the environment. At last, the subject was no longer the sole possessor of his own internal life; rather, in effect, his internal life was a product of external relationships, and it existed somewhere in the interaction of self and surroundings. To put it baldly, the self was now an epiphenomenon, a mere part of an information system.

As one researcher (Dr. Robert Jay Lifton) reported, “milieu control,” the first and most powerful technique to bring about thought reform in a target, entailed foremost the fine-tuned control and circulation of messages. Another (Donald Hebb) found that sensory deprivation, brought about when environmental surroundings were completely controlled by being blocked, resulted in extreme changes in experimental subjects in very short periods of time – within hours. The inner states of such experimental subjects were no longer envisioned as “inside,” but were seen as complex information networks. After all, if a matter of a few hours in a sensory deprivation tank could result in extreme dissociation, what did this say about the autonomy of the human self? An additional behavioral expert (Dr. Louis Jolyon West) found that a process amounting to extreme environmental manipulation had led to what was sometimes called the “ultimate demoralization” of imprisoned men: as his research team reported, “Whenever individuals show extremely selective responsiveness to only a few situational elements, or become generally unresponsive, there is a disruption of the orderliness, i.e., sequence and arrangement of experienced events, the process underlying time spanning and long-term perspective.” The result was a tragedy for the prisoner, but a research boon for the systems theorist: “By disorganizing the perception of those experiential continuities constituting the self-concept and impoverishing the basis for judging self-consistency, [extreme environmental control] affects one’s habitual ways of looking at and dealing with oneself.” When treated as information, even the self could be scrambled.

This flood of new research on systemic demoralization marked the arrival of cybernetics, information theory, and systems theory within projects that would previously have stood firmly in one camp, be it the conduct of war or the pursuit of basic stimulus-response psychology. During the post-World War II years, new fields not only spoke to each other, they frenziedly traded once-trademarked techniques, piggybacking on and adding to each other’s special methods; they reached across once-respected aisles. It amounted to a new way of viewing what it meant to be human: now, according to brainwashing experts influenced by systems theory, the human subject was something akin to information distributed within a milieu. As mentioned above, the human being (prisoner, subject, spy) within a controlled environment (prison, reeducation camp, barracks) was no longer seen as an individual involved in a meaningful struggle. Rather, according to a cybernetic approach to “biological and social systems,” he was information that circulated within a system. According to prominent psychologist James G. Miller, avatar of a general behavior systems theory, systems are “bounded regions in space-time, involving energy interchange among their parts, which are associated in functional relationships, and with their environments.” Instead of a vision of a complex, Freudian, deep, singular self, behavioral scientists like Miller described a distributed set of relationships, ever changing, responding to new conditions and new information. Beyond that, in many cases, lay a fantasy of complete, push-button control over each designated Rat and every potential POW, over ideological enemies, spies, deviants, and even consumers. At the same time, the more experts sought systemic control, the more the system was at risk of chaotic outbursts, “singing conditions,” and unpredictable instability.

Three American POWs who were brainwashed, pictured in the Saturday Evening Post article, “The GI’s Who Fell for the Reds” (March 6, 1954). Such prisoners presented problems — and research opportunities — for U.S. behavioral scientists and systems theorists.

All told, in the postwar world, a systems approach enabled researchers to fathom what had happened, to protect against it happening to American G.I.s (it was hoped), and to engineer its use against the enemy. So emerged a program in what has been called “soft torture”: the thoroughgoing use of environmental input – manipulation of the prisoner’s sleep, temperature, clothing, body image, anxiety level, sense of dignity and ultimately sense of self – to bring about demoralization and to extract “actionable intelligence.” (Whether “good” intelligence ever emerges from such scenarios is a matter of much debate; experienced interrogators say such information is never useful.)

During the post-9/11 campaigns in Afghanistan and Iraq, these techniques reemerged, in a story that has been well told. Then Abu Ghraib happened. Abu Ghraib showed what occurs when systems of milieu control involving variables of complexity hit a glitch. It is interesting to note, however, that subsequent debate in the American public sphere was not about sophisticated interrogation procedures involving advanced environmental engineering and the combination of techniques, nor about the massive regularization and standardization of what amounted to torture – for by this point some 21,000 interrogations had been routinely performed under exacting bureaucratic standards. Instead the debate ended up revolving around whether “a few bad apples” on the one hand, or Donald Rumsfeld on the other, were to blame. By 2004, passions had come to settle on a single, spectacular technique, waterboarding. This ignored the fact that such coercion happens as part of a system (whether or not it ends up getting “actionable results”), and systems include within their workings the threat of behavioral chaos, indeed rely on it. But unlike Rat performing in his electronic maze, which to an expert audience once clearly dramatized a vision of possible futures, most onlookers today are still not interested in seeing this.