In 2009, numerous reports of a potentially dangerous flu activated existing global systems of surveillance. As public health officials attempted to characterize the strain associated with the outbreak, they turned to a unique historical resource to help determine the scale of preparations necessary for managing a possible epidemic: freezers filled with blood.

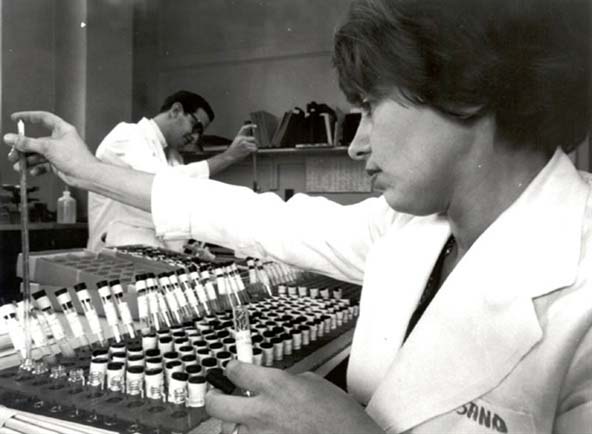

Blood serum samples from human and non-human populations collected and preserved at labs around the world years earlier were compared to the 2009 flu strain. The goal was to determine changes in the virus and to see what immunological traces it had left in the serum. Such knowledge would yield important clues as to what was in store. Had the 2009 virus evolved resistance to existing medicines? Would available vaccines afford adequate protection to those in need? The story of how old blood serum became the substrate for this kind of epidemiological sentinel system is central to understanding present-day strategies for anticipating and mitigating threats to population health.

In the early 1950s, Yale polio researcher John Rodman Paul and his colleagues began to publicize an approach to tracking disease they called “serological epidemiology.” This new method represented a marriage of the techniques of the biological lab (specifically, the analysis of serum, the liquid component of blood) to the practice of epidemiology, the study of patterns of infection and immunity. To be sure, the techniques available for analyzing blood serum in the 1950s were not new. Serology had been in use by immunologists since the 19th century, and physical anthropologists had been working since the early 20th century to detect and map variable genetic traits such as the ABO blood groups.

However, Paul realized that once collected, such blood could be preserved and therefore made available for new uses in the future. He argued that to realize its full potential as a sentinel system, serological epidemiology required: (1) the ability to analyze and subsequently reanalyze large numbers (hundreds of thousands) of unique blood samples, for purposes other than those for which they were originally collected, and (2) the long-term cold storage of such specimens, along with information about the persons from whom they were collected.[1]

Serological epidemiology gained momentum due to a number of factors, including improvements in technologies of cold storage such as mechanical refrigeration and liquid nitrogen; an increased recognition of the relevance of the lab to matters of public health; unprecedented access to air travel, which facilitated the collection and circulation of blood samples; new computing technology for handling large amounts of data; and the creation of new international organizations, such as the World Health Organization (WHO), with the authority to standardize protocols to ensure consistency across labs in the ways they maintained and analyzed blood.[2]

In 1958, a clutch of experts, including Paul’s collaborator at Yale, Dorothy Horstmann, convened at the WHO’s headquarters in Geneva to draft a plan for implementing serological epidemiology on a global scale. Influenza weighed heavily on the minds of the participants. Many of those in attendance, including Horstmann, had lived through the deadly 1918 “Spanish Flu” pandemic. That flu, which would later be identified as an H1N1 strain, was linked to more deaths than World War I. At the time, however, little was known about the causes of influenza, let alone its biology. The participants at the Geneva meeting believed that serological epidemiology would play a critical role in attempts to harness emerging insights in biomedicine for the management of future outbreaks.

The following year, in 1959, the WHO published its recommendations in a report titled “Immunological and Haematological Surveys.” Pointing to the case of the 1918 flu pandemic, the authors stated:

If samples of the sera collected in these surveys are stored in such a way as to preserve antibodies, it will be possible to examine them in the future and so to determine the past history of infections as yet unknown and to follow more clearly the changing pattern of communicable diseases all over the world (WHO, 1959).

“As yet unknown” would be a familiar refrain to those who supported the creation, maintenance, and use of these accumulated frozen blood samples. The phrase alluded to the growing conviction that blood harbored traces of biological risks not yet understood by public health workers. The power of serological epidemiology stemmed from two related ways of imagining the uses of old, cold blood: to anticipate known risks and to locate the origins of unanticipated and emergent threats.

In the decades that followed, storehouses of blood grew as samples were collected from a wide range of bodies including members of communities that had recently experienced epidemics, military recruits, Peace Corps volunteers, students, immigrants, and so-called “primitive peoples.” The awareness that infections sometimes jumped from a non-human to a human host also led epidemiologists to begin collecting blood from the domesticated and wild animals that lived amidst some of these populations.

In the 1980s, frozen blood samples collected during the early years of this push to create long-term repositories were used to make a case for the African origins of HIV. Historian Edward Hooper has described how two researchers, Arno Motulsky and Moses Schanfield, re-analyzed hundreds of old specimens and found one, collected near Leopoldville in 1959 (the same year that the WHO report on “Immunological and Haematological Surveys” was published), that tested positive on all of the then-available antibody tests associated with HIV (Hooper, 1999).

Around the same time, using a DNA amplification technique known as polymerase chain reaction (PCR), epidemiologists also began to incorporate genomics into their analytic repertoire. They searched old blood for fragments of DNA that could inform, and have since informed, studies of infectious diseases, including H1N1, H5N1 (bird flu), and SARS. Recently, some scientists have begun to mine old human blood for the DNA of malaria. The hope is that this new application of genomics will yield clues about when and how the plasmodium evolved resistance to drugs that had previously been effective in preventing infection. Still other researchers are defrosting old blood samples to gain purchase on the genetics of a huge range of complex conditions, ranging from diabetes to schizophrenia.

As blood samples continue to be accumulated in freezers around the world, it is possible to say that the serological epidemiological system formalized in 1959 has realized, if not exceeded, the intentions of Paul, Horstmann, and others who anticipated the need for new strategies to manage disease risks. In 2009, for instance, serological epidemiology—which by then encompassed older immunological tests as well as new genomic ones—helped public health workers to determine that “swine flu” would not be as deadly as the 1918 flu.

At the same time, certain people whose blood is stored as part of this surveillance system have sought to have it removed from the ongoing and increasingly lucrative enterprise of revealing new forms of embodied risk. In the decades since the first round of serological epidemiological collections was assembled, blood, including some that was collected under the auspices of the WHO’s protocols, has been at the center of potent debates about patenting the body, the racialization of genomic medicine, and abuses of human research subjects. Some communities, such as the Havasupai in Arizona and the Yanomami in the Amazon, have demanded that their blood be removed from biomedicine’s freezers. In doing so, they have demonstrated that new uses for old blood have been accompanied by new forms of exclusion or injury. In other words, though scientists initially understood this blood to be frozen, they have discovered that attitudes about the ends to which it can and should be put are far from fixed.

While these kinds of biosocial harms were not among those anticipated by the architects of serological epidemiology, the architects should not be faulted for failing to accurately predict the future. The system of surveillance they forged was designed to accommodate known unknowns: the likely emergence of new viruses. Looking forward to great advances in biomedicine, they did not consider that those advances would inevitably be accompanied by new ideas about what it means to be a subject of biomedical research. It is in this sense that present-day debates around the appropriate uses of old frozen blood have come to serve as a sentinel of emerging problems of ethics as well as of epidemiology.